How Vibes Works

Designed to help teams respond with empathy, deliver trust, and build stronger relationships at scale.

Analyzes every few seconds

Conversation near the watch is analyzed every few seconds, so the result always stays up to date.

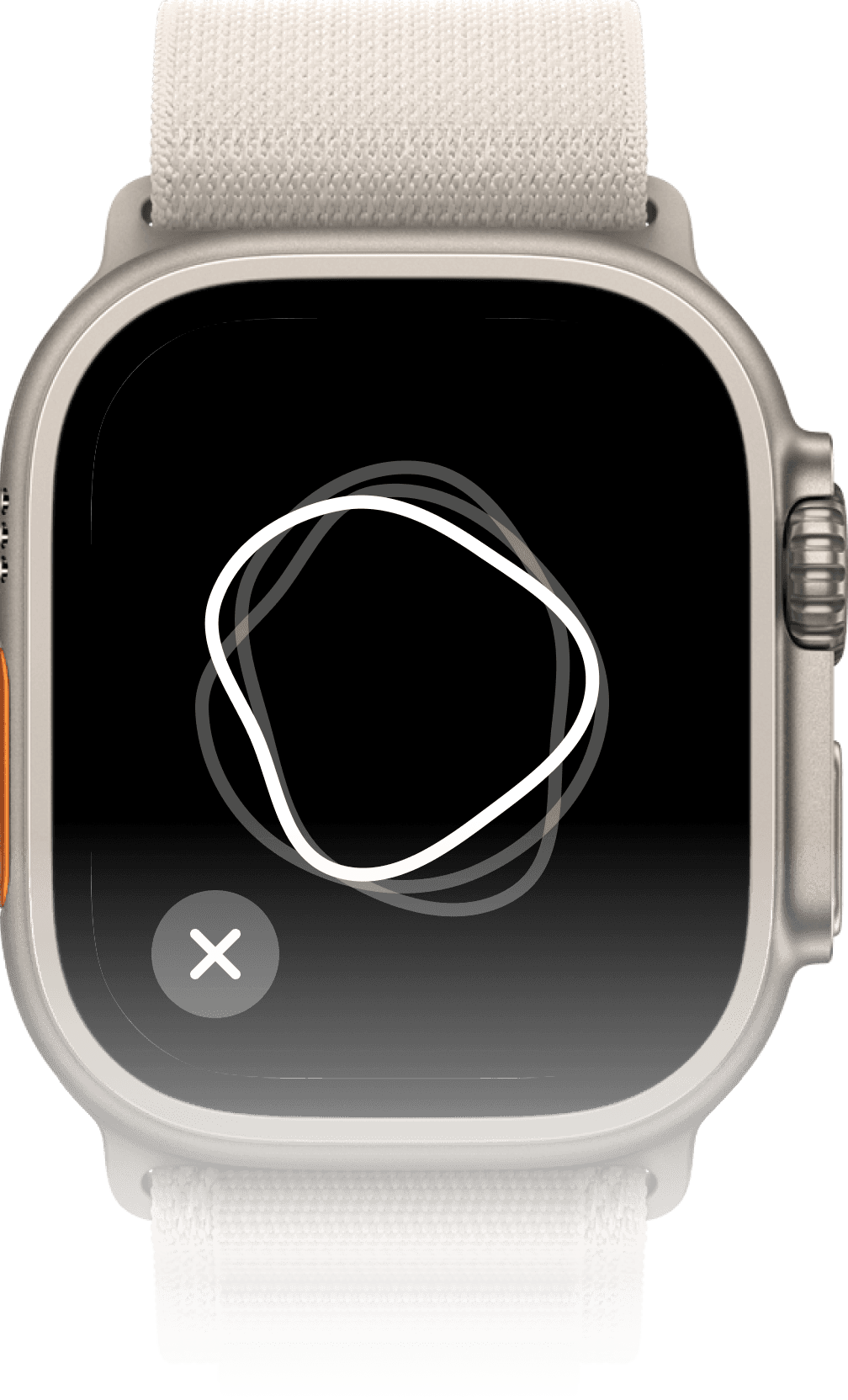

Confidently interprets the emotion

Once the watch is confident in the emotion it has recognized, a custom tap and animation notify you.
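The two steps above (analyze a short window every few seconds, then notify only when confident) can be sketched roughly as follows. This is an illustrative sketch, not how Vibes is implemented: the 0.8 threshold, the emotion labels, the score format, and the choice to notify only when the confident emotion changes are all assumptions made for the example.

```python
from typing import Callable, Dict, Iterable, Optional

# Hypothetical value: the source does not state the confidence level Vibes uses.
CONFIDENCE_THRESHOLD = 0.8


def confident_emotion(scores: Dict[str, float],
                      threshold: float = CONFIDENCE_THRESHOLD) -> Optional[str]:
    """Return the top-scoring label if its score reaches the threshold, else None."""
    if not scores:
        return None
    label, score = max(scores.items(), key=lambda item: item[1])
    return label if score >= threshold else None


def run_analysis(windows: Iterable[Dict[str, float]],
                 notify: Callable[[str], None]) -> None:
    """Process one set of scores per analysis window.

    Assumption for this sketch: the user is notified only when the
    confidently recognized emotion differs from the last one notified.
    """
    last_notified = None
    for scores in windows:
        label = confident_emotion(scores)
        if label is not None and label != last_notified:
            notify(label)  # stands in for the tap and animation
            last_notified = label


# Made-up scores for four consecutive analysis windows.
notifications = []
windows = [
    {"happy": 0.55, "neutral": 0.45},  # not confident, no notification
    {"happy": 0.91, "neutral": 0.09},  # confident, notify "happy"
    {"happy": 0.88, "neutral": 0.12},  # same emotion, no repeat
    {"neutral": 0.85, "happy": 0.15},  # confident change, notify "neutral"
]
run_analysis(windows, notifications.append)
print(notifications)  # ['happy', 'neutral']
```

Holding back low-confidence results trades a little responsiveness for fewer spurious taps on the wrist.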

Emotion recognition

Recognizes 7 Basic Emotions

See Vibes in Action

Used and trusted by the community

Frequently Asked Questions

What data does Vibes take in?

Vibes uses only on-device processing. Your vocal data and emotional classifications never leave your device, so neither we nor any third party can access them. Your watch temporarily records brief intervals of speech to analyze their emotional content, but these recordings are automatically deleted from your device within seconds, so you will not be able to access them either.

How much does Vibes cost?

Vibes for Apple Watch costs $8.99 per month (with a one-week free trial) or $89.99 per year on the App Store. We are currently running a promotion for yearly subscriptions: 44% off the first year, which brings it to $49.99.
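For readers comparing plans, the arithmetic behind these prices can be checked with a short script. It uses only the figures quoted above and, as a simplification, ignores the free trial and any taxes.

```python
from decimal import Decimal

# Prices quoted in the answer above.
monthly = Decimal("8.99")
yearly = Decimal("89.99")
promo_first_year = Decimal("49.99")

# Cost of twelve months on the monthly plan versus one yearly payment.
twelve_months = monthly * 12
yearly_saving = twelve_months - yearly

# Discount implied by the promotional first-year price.
promo_discount = (1 - promo_first_year / yearly) * 100

print(f"12 months billed monthly: ${twelve_months}")       # $107.88
print(f"Saved by paying yearly:   ${yearly_saving}")       # $17.89
print(f"Promotional discount:     {promo_discount:.0f}%")  # 44%
```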

Which devices are supported by Vibes?

Currently, Vibes is available only for Apple Watch Series 4 and later. A standalone iPhone app is coming soon, and in the future we'd like to support other smartwatches and platforms.

Which languages or accents are currently supported?

Vibes currently supports only North American English, including the diversity of age, gender, race, neurotype, geography, and communication style among its speakers. More languages and accents are coming soon!